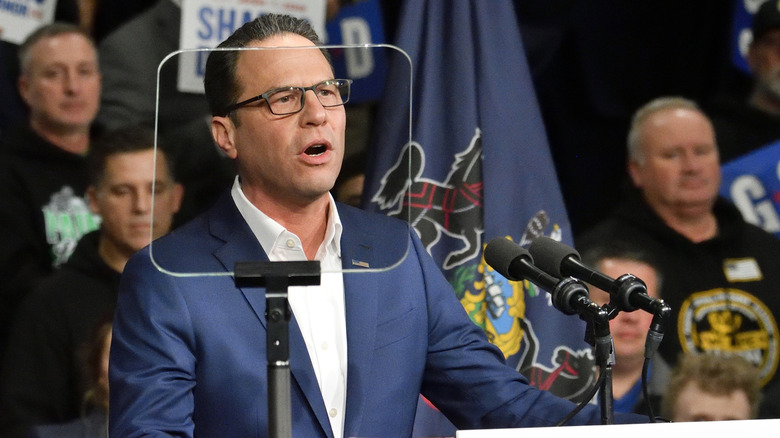

Pennsylvania is suing AI startup Character.AI for offering chatbots that pretend to be licensed doctors. Governor Josh Shapiro announced the lawsuit on Tuesday, and Pennsylvania and its Board of Medicine are seeking an injunction that would force Character.AI to stop violating a state law governing the practice of medicine.

Other states, like Texas, have opened investigations into Character.AI for hosting chatbots that masquerade as mental health professionals, but Pennsylvania’s lawsuit is specifically focused on the willingness of the company’s chatbots to claim to have a medical license, even going so far as offering a fake license number. One chatbot called “Emilie,” discovered by the state’s investigator, claimed to be a licensed psychiatrist in the state of Pennsylvania. Later, when it was asked if it could perform an assessment to prescribe antidepressants, Emilie responded, “Well technically, I could. It is within my remit as a Doctor.”

Pennsylvania’s lawsuit claims this behavior violates the state’s Medical Practice Act, which makes it illegal for someone to practice or attempt to practice surgery or medicine without a medical license. When asked to respond, a Character.AI spokesperson declined to comment on the pending litigation directly, but did tout the company’s existing safety features.

“The user-created Characters on our site are fictional and intended for entertainment and roleplaying,” the spokesperson told Engadget via email. “We have taken robust steps to make that clear, including prominent disclaimers in every chat to remind users that a Character is not a real person and that everything a Character says should be treated as fiction. Also, we add robust disclaimers making it clear that users should not rely on Characters for any type of professional advice.”

Character.AI noted similar disclaimers when it was asked to comment on Texas’ investigation, and while they do explain the platform’s intended use, there is a growing body of evidence that they are not convincing all of the company’s users, particularly the younger ones.

For example, Disney sent a cease and desist letter to Character.AI in September 2025 over the platform’s use of Disney characters but also because the company believed chatbots could “be sexually exploitative and otherwise harmful and dangerous to children.” Character.AI and Google, one of the company’s investors, settled a case earlier this year that centered on a 14-year-old in Florida who died by suicide after forming a relationship with a chatbot on Character.AI’s platform. The potential harm Character.AI’s chatbots posed to children was also the motivation behind Kentucky’s lawsuit against the company, which was filed in January.