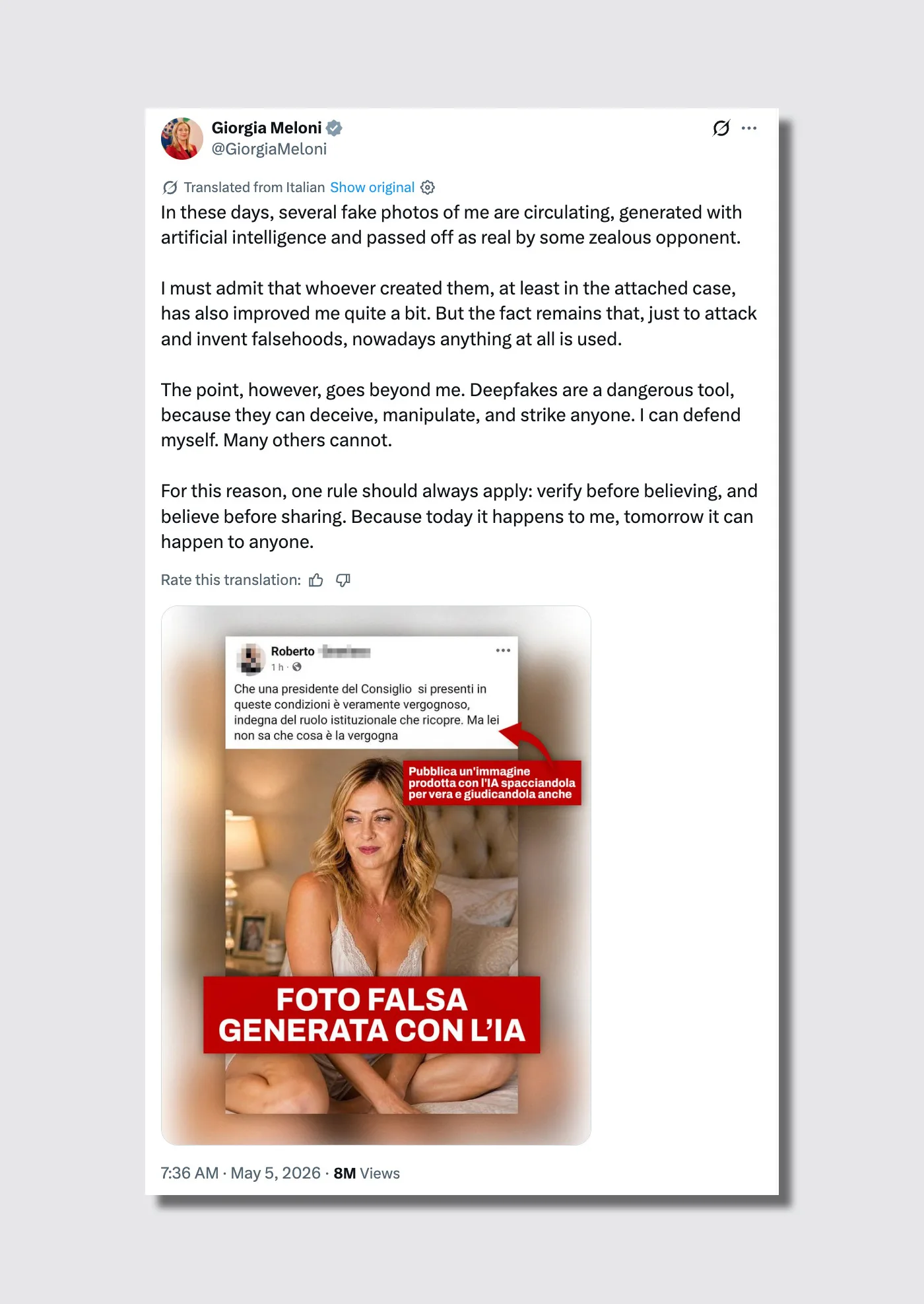

Yesterday, Giorgia Meloni posted to X an AI-generated image of herself wearing only lingerie. The Italian prime minister shared the image to warn others about how easy it is to create perfectly believable images and videos. Her warning: Never believe anything you see without thoroughly fact-checking it.

After all, we are living at the end of reality.

“Deepfakes are a dangerous tool, because they can deceive, manipulate, and hit anyone,” Meloni said on X. “I can defend myself. Many others can’t.”

She is right, even though the image is not technically a deepfake. It’s a fully AI-generated image that features her face. Unlike early deepfakes, which simply swapped the face of one person in a source image for the face of another, generative AI can combine different elements, such as real faces, bodies, places, voices, and sounds, to create 100% new synthetic media.

This process makes its true nature virtually, if not completely, undetectable: Because you can’t reverse-search the image and match it to an original source on the web, you may believe it’s original (and real).

Meloni has already sued two men for making a deepfake porn video of her in 2024. This time around, she joked that the fakes look “a lot” better than she does and posted the image as a very 2026 PSA. “This is why one rule should always apply: Verify before believing, and believe before sharing. Because today it happens to me; tomorrow it could happen to anyone,” she wrote.

Meloni showed courage by putting herself out there, but more must be done than doling out advice. We’re way past the point of education. The world needs action.

Generative AI poses an existential danger to humanity. It can weaponize our psychological biases, effectively destroying our shared sense of objective reality.

Just look at what’s happened over the past few months. There’s Jessica Foster, an AI-generated, pro-Trump military influencer who amassed a million followers in just three months to funnel men toward an adult fetish site (her account was later deleted from Instagram). And even though Foster’s digital persona, unlike Meloni’s image, was riddled with obvious rendering glitches and absurd scenarios, her followers willfully ignored them because the mirage perfectly satisfied their ideological fantasies.

When a legitimate video was released proving that Israeli Prime Minister Benjamin Netanyahu was alive following assassination rumors, the internet, aided by hallucinating AI chatbots, instantly and falsely dismissed the footage as a deepfake. Even after independent analysts and fact-checkers provided irrefutable proof that the video was authentic, the evidence didn’t sway those who preferred their own conspiracy theories.

Every politician must act now

Trapped in this unreal dystopia, where the perimeter of objective truth has been completely vaporized by tech giants, society needs more than an X post. Public awareness and educational campaigns are no longer sufficient to combat the massive human and economic cost that this is already causing.

The only remaining exit strategy to save our shared reality is for world governments to aggressively intervene and force technology companies to adopt hardware and software that can authenticate real images, videos, and audio beyond any shadow of a doubt.

In March, a team at ETH Zurich proposed the only solution that feels serious enough for the scale of the threat: sensors that cryptographically sign an image at the exact moment that light and sound hit them.

Unlike today’s systems, which stamp authenticity via the device’s main processor, leaving them vulnerable to interception and tampering, this design locks verification directly into the act of capture itself.

In plain terms, it would make it vastly harder to pass off synthetic media as real, because the proof of authenticity would be born inside the hardware, not added afterward by software that can be spoofed. That way, people could look for the “stamp of truth” in any media published anywhere, from publications to social networks.
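To make the idea concrete, here is a minimal Python sketch of capture-time authentication. It is not the ETH Zurich design: HMAC-SHA256 with a hypothetical per-device secret (`DEVICE_KEY`) stands in for the public-key signature a real sensor would compute, and the function names `capture_and_sign` and `verify` are illustrative only. What it shows is the property described above: the tag is computed over the raw sensor bytes at the moment of capture, so changing even a single byte afterward makes verification fail.

```python
import hashlib
import hmac
import os

# Hypothetical per-device secret. In a capture-time scheme the key would live
# inside the sensor hardware; here it is just random bytes, and HMAC-SHA256 is
# a symmetric stand-in for a real public-key signature.
DEVICE_KEY = os.urandom(32)


def capture_and_sign(raw_pixels: bytes, key: bytes = DEVICE_KEY) -> bytes:
    """Compute an authentication tag over the raw sensor output at 'capture'."""
    return hmac.new(key, raw_pixels, hashlib.sha256).digest()


def verify(raw_pixels: bytes, tag: bytes, key: bytes = DEVICE_KEY) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = hmac.new(key, raw_pixels, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    frame = bytes(range(256)) * 4          # stand-in for a raw sensor readout
    tag = capture_and_sign(frame)

    print(verify(frame, tag))              # untouched frame verifies
    edited = bytearray(frame)
    edited[0] ^= 0xFF                      # alter one "pixel" after capture
    print(verify(bytes(edited), tag))      # any edit breaks the tag
```

A real deployment would use an asymmetric scheme such as Ed25519, so that anyone holding the manufacturer's public key could check the stamp without being able to forge it; with HMAC, the verifier needs the same secret that produced the tag.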

And anything without that stamp, as Meloni says, should be automatically doubted and disregarded.

States must also give their citizens tools to take down any image that uses their faces, by enacting laws similar to the Digital Millennium Copyright Act in the U.S. But rather than being forced to copyright themselves, ordinary people should be able to easily take down any unauthorized AI version of themselves on any public website or social network.

Right now, only the Danish government has done this. To protect its citizens against AI cloning, last year the country rewrote its legal code to ensure that citizens strictly own the rights to their biological faces and natural speaking voices.

Danish Culture Minister Jakob Engel-Schmidt summed it up perfectly back then: “Human beings can be run through the digital copy machine and be misused for all sorts of purposes, and I’m not willing to accept that.”

The Meloni case, one of millions, shows once again that the Danish culture minister was 200% right when he declared the urgency of the law his government passed. We need to stop this problem decisively with these tools and any others that lawmakers and engineers can come up with.

Fake images, as long as they stay within existing legal limits, can coexist with reality just fine. But the tech giants profiting off this problem need to act now.