Finally, Seedance 2.0 is now available in the U.S. This extraordinary generative video AI model, made by TikTok’s Chinese parent company ByteDance, is capable of creating high-definition video so realistic that it’s shattering our visual truth into a billion pieces.

But hey, who cares? If we’re going down in flames as a species, let’s have fun putting dumb videos together. I’ll tell you how to do it in this short guide to making Seedance 2.0 videos. Time to roll up for a Magical Mystery Tour. Step right this way!

Signing up for Higgsfield

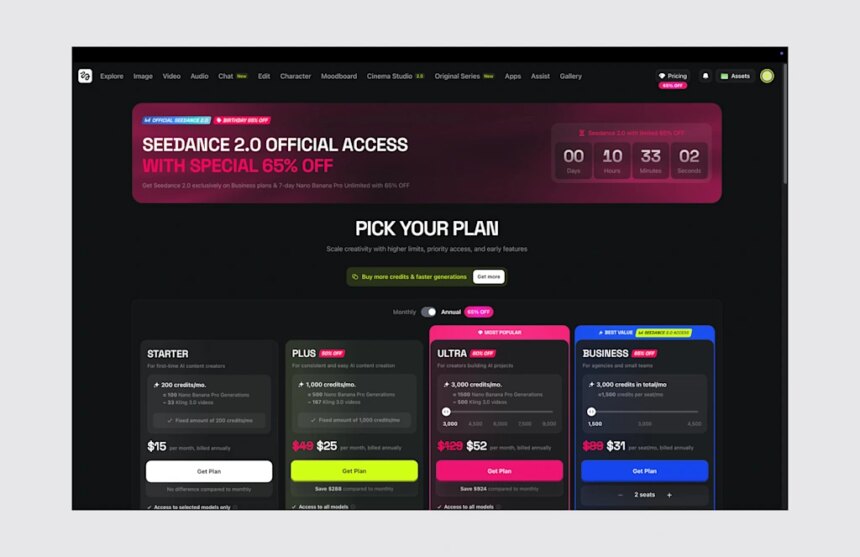

To use Seedance 2.0, you first need to sign up for Higgsfield. This platform is essentially a unified digital workspace that wrangles multiple artificial intelligence video engines, like Seedance, Veo, and Kling, into a single interface. Instead of paying for a dozen disjointed subscriptions, you get a centralized dashboard to use them all.

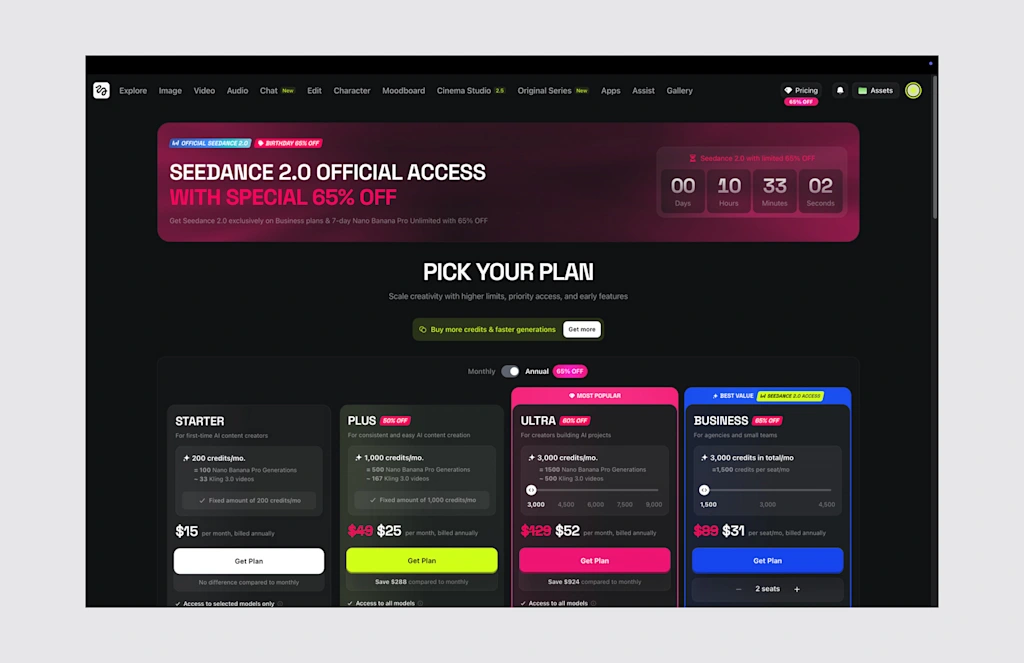

To get started, you need to create an account and subscribe to either the Business ($49 billed monthly) or Ultra ($84) tier. If you happen to live outside the U.S. or Japan, prepare to jump through one extra hoop by verifying a corporate email address to unlock the model.

Generating content on this platform runs through the typical credit economy. A standard 5-second clip rendered at 720p resolution burns 30 credits. Each credit costs from $0.04 to $0.08, depending on your subscription plan. To “celebrate” the End of Reality as We Know It, the company is currently offering 65% off at sign-up to lessen the initial financial blow.
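If you want to know what that means in actual dollars, the arithmetic is quick. Here is a minimal sketch that uses only the figures quoted above (30 credits per 5-second 720p clip, $0.04 to $0.08 per credit). The function name is made up for illustration, and the sketch ignores the sign-up discount and any pricing differences at other resolutions or lengths.

```python
# Figures taken from the text: a 5-second 720p clip burns 30 credits,
# and one credit costs between $0.04 and $0.08 depending on the plan.
CREDITS_PER_CLIP = 30
CREDIT_PRICE_RANGE = (0.04, 0.08)


def clip_cost_range(clips: int = 1) -> tuple[float, float]:
    """Return the (cheapest, priciest) dollar cost for a number of clips."""
    low, high = CREDIT_PRICE_RANGE
    credits = CREDITS_PER_CLIP * clips
    return round(credits * low, 2), round(credits * high, 2)


print(clip_cost_range())    # (1.2, 2.4)
print(clip_cost_range(10))  # (12.0, 24.0)
```

In other words, each five-second test costs somewhere between $1.20 and $2.40, so ten experiments will set you back $12 to $24.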

How Seedance 2.0 works

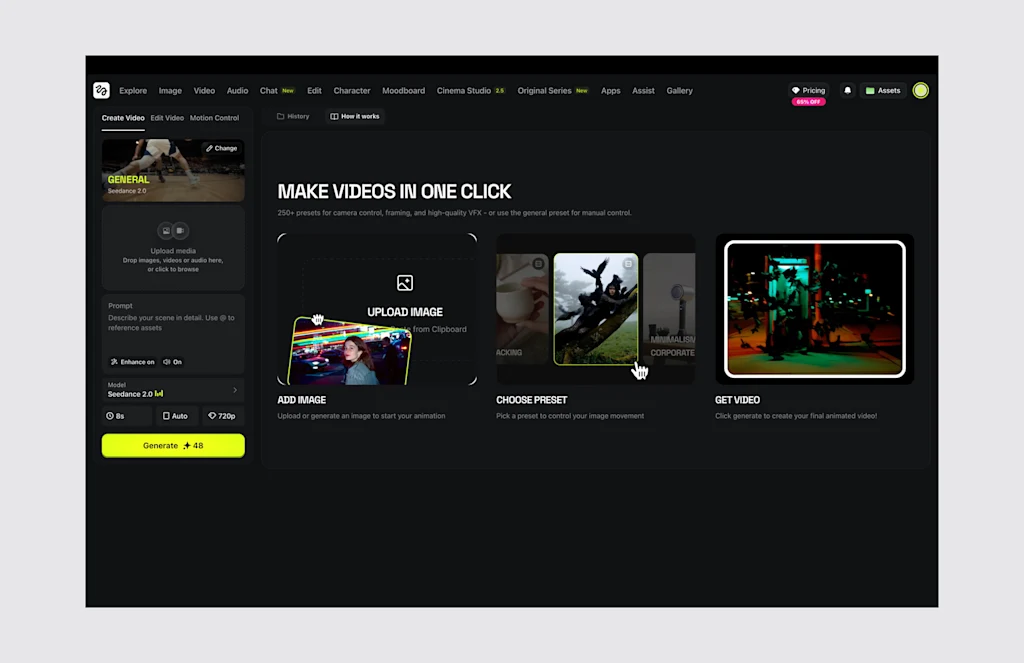

Once you’re in, you can select Seedance 2.0 from the main dropdown menu. Seedance 2.0 is multimodal, which means it accepts up to 12 simultaneous media inputs. You can add up to nine static reference images, up to three video snippets capped at 15 seconds each, and up to three audio tracks. It then spits out video shots (at 480p, 720p, 1080p or 2K resolution with upscaling) that max out at 15 seconds per generation.
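Those limits are easy to trip over, since the per-type maximums add up to more than 12. The following is a rough pre-flight check, assuming the 12-input cap applies to the combined total of images, videos, and audio tracks. It is not part of any Higgsfield tool, and the function and constant names are hypothetical.

```python
# Limits as described in the text. Treating 12 as a combined cap
# across all media types is an assumption of this sketch.
MAX_TOTAL = 12
MAX_IMAGES = 9
MAX_VIDEOS = 3
MAX_AUDIO = 3
MAX_VIDEO_SECONDS = 15


def check_inputs(images: int, video_lengths: list[float], audio_tracks: int) -> list[str]:
    """Return a list of human-readable problems (empty if the mix looks fine)."""
    problems = []
    if images > MAX_IMAGES:
        problems.append(f"too many images: {images} > {MAX_IMAGES}")
    if len(video_lengths) > MAX_VIDEOS:
        problems.append(f"too many videos: {len(video_lengths)} > {MAX_VIDEOS}")
    for i, seconds in enumerate(video_lengths, start=1):
        if seconds > MAX_VIDEO_SECONDS:
            problems.append(f"video {i} is {seconds}s, over the {MAX_VIDEO_SECONDS}s cap")
    if audio_tracks > MAX_AUDIO:
        problems.append(f"too many audio tracks: {audio_tracks} > {MAX_AUDIO}")
    total = images + len(video_lengths) + audio_tracks
    if total > MAX_TOTAL:
        problems.append(f"too many inputs overall: {total} > {MAX_TOTAL}")
    return problems


print(check_inputs(6, [10.0, 15.0], 2))        # []
print(check_inputs(9, [10.0, 12.0, 15.0], 3))  # one problem: 15 inputs overall
```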

The model puts everything together, constructing the visuals, building up the characters (and maintaining a coherent appearance between shots), moving the camera per your instructions, and syncing sound and speech at the exact same millisecond. The result is flawless lip-syncing and accurate spatial audio without needing a dedicated post-production pass (although professionals will still bring the results into dedicated editing software like Adobe Premiere).

It is very simple to run, so go for it and give it a spin: Upload your reference files into the workspace and type out a plain-language description of what you want to see. After you hit generate, you’ll get your clip. If the platform defaults your output to 480p or 720p to save processing time, you can dive into the advanced settings to force a higher pixel count, or simply run the draft through the built-in upscaling tool to push it to a crisp 1080p or 2K finish. If you need a longer movie, you can click “extend” to chain multiple 15-second blocks together indefinitely.
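To plan a longer piece, it helps to know how many blocks you will be chaining. A back-of-the-envelope sketch, assuming every generation and every “extend” contributes at most 15 seconds (the function name is invented for this example):

```python
import math

MAX_SECONDS_PER_BLOCK = 15  # per-generation cap quoted in the text


def blocks_needed(target_seconds: float) -> int:
    """Number of 15-second blocks needed to reach the target length."""
    if target_seconds <= 0:
        raise ValueError("target length must be positive")
    return math.ceil(target_seconds / MAX_SECONDS_PER_BLOCK)


print(blocks_needed(15))  # 1
print(blocks_needed(50))  # 4
print(blocks_needed(60))  # 4
```

A one-minute short therefore means one initial generation plus three extensions.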

Craft the perfect prompt for Seedance 2.0

For best results, however, you need to follow some basic rules for writing prompts. Nothing onerous, but you must use the following structure. First, start by explicitly defining your subject (their clothing, identity, and the surrounding environment) before moving on to the specific action and how long it should take.

From there, you dictate the exact camera framing, such as a “slow dolly-in,” followed by the overall cinematic style and any strict constraints. Try to use positive instructions that describe what should exist on screen, and avoid negative commands entirely.
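If you like to keep your prompts tidy, the ordering above (subject, action and duration, camera, style, constraints) can be captured in a tiny helper. This is only an illustration of the ordering: the function, its parameters, and the “Camera:” and “Style:” labels are my own assumptions, not a format Seedance requires.

```python
def build_prompt(subject: str, action: str, camera: str, style: str,
                 constraints: tuple[str, ...] = ()) -> str:
    """Assemble a prompt in the order: subject, action, camera, style, constraints."""
    parts = [subject, action, f"Camera: {camera}", f"Style: {style}", *constraints]
    # Drop empty pieces and trailing periods, then join into sentences.
    cleaned = [p.strip().rstrip(".") for p in parts if p.strip()]
    return ". ".join(cleaned) + "."


prompt = build_prompt(
    subject="A blonde man in a pink tutu stands on a rain-soaked rooftop at night",
    action="He spins once and bows over three seconds",
    camera="slow dolly-in",
    style="moody film noir",
    constraints=("The skyline stays in sharp focus",),  # positive phrasing
)
print(prompt)
```

Note that the constraint is phrased as what should be on screen (“stays in sharp focus”) rather than what should not (“no blurry background”).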

Specificity is your best weapon against the AI’s natural tendency to hallucinate (although this model is really good at understanding the world). This is especially important for scenes with multiple characters, who can easily get mixed up. Instead of just trying to control the “man,” talk about the “blonde man in a pink tutu” so the engine knows exactly who you mean.

The smartest workflow here is to generate some short five-second screen tests first. If the resulting footage is slightly off, tweak only a single variable in your text prompt and regenerate, methodically isolating the problem rather than rewriting the entire scenario from scratch over and over. Once you’re happy, build on that experience to create your final shot.

Now go on, take out your credit card, and have fun destroying the planet and our brains!
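One way to stay honest about the “single variable” rule is to keep each draft as a set of named fields and compare them before you regenerate. A small sketch, with hypothetical field names of my own choosing:

```python
def changed_fields(before: dict[str, str], after: dict[str, str]) -> list[str]:
    """List the prompt fields that differ between two drafts."""
    keys = before.keys() | after.keys()
    return sorted(k for k in keys if before.get(k) != after.get(k))


draft = {
    "subject": "A blonde man in a pink tutu on a rooftop",
    "action": "He spins once and bows over three seconds",
    "camera": "slow dolly-in",
    "style": "moody film noir",
}

# Second screen test: change the camera move and nothing else.
retry = dict(draft, camera="static wide shot")

print(changed_fields(draft, retry))  # ['camera']
```

If that list ever has more than one entry, you will not know which change fixed (or broke) the shot.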