Whispers followed her offline. Online, the abuse exploded, unchecked: comments, ridicule, shares, screenshots. She had never consented to any of it. That hadn’t stopped anyone.

Within minutes, hundreds had seen the content. Within hours, hundreds of thousands.

The nightmare had only begun.

Days passed before platforms responded. By then, the images had been viewed, saved and replicated. She was left asking: Who do I report this to? Will anyone believe me? Will the people who did this ever face consequences? Or will the blame land on me?

This is the reality for hundreds of women and girls every single day. AI deepfakes are destroying real lives, and justice remains out of reach for most survivors.

Her story could be yours.

Deepfake abuse is the sharp edge of a wider pattern of digital violence targeting women and girls. It’s gendered and it’s escalating. Right now, the systems designed to protect people are failing, while the tools to cause harm become cheaper, faster and easier to use every day.

Here’s what you need to know:

What is deepfake abuse and how common is it?

Deepfakes are images, audio or videos manipulated by artificial intelligence (AI) that make it appear someone said or did something they never did.

The technology itself isn’t new, but its weaponisation against women and girls is a newer phenomenon, and it’s accelerating fast.

- deepfake pornography made up 98 per cent of all deepfake videos online, and 99 per cent depicted women, according to a 2023 report

- deepfake videos were an estimated 550 per cent more prevalent in 2023 than in 2019

- the tools to create them are widely available, usually free, and require little or no technical expertise

- once posted, AI-generated content can be replicated endlessly, saved to private devices, and shared across platforms, making it nearly impossible to fully remove

Why survivors don’t report and what happens when they do

Underreporting is one of the biggest obstacles to accountability. For survivors who do come forward, the justice system often becomes another source of trauma.

- Survivors are asked repeatedly to view and describe abusive content with police, lawyers and platform moderators, while often facing questions like, “are you sure it’s not real?” or “did you share intimate images before?”

- If a case reaches court, their clothing, relationships and past behaviour go under the microscope, not the perpetrator’s

- Harm doesn’t stay online, according to a UN Women survey, which found 41 per cent of women in public life who experienced digital violence also reported facing offline attacks or harassment linked to it

Why deepfake creators rarely face justice

Despite the scale of harm, prosecutions are rare, platforms routinely fail to act and survivors are often re-traumatised when they try to seek help. Here’s why:

The law hasn’t caught up, as fewer than half of countries have laws that address online abuse and even fewer have legislation that specifically covers AI-generated deepfake content

- most “revenge porn” or image-based abuse laws were written before deepfakes existed, leaving gaping loopholes

- in many countries, deepfake porn or AI-generated nude images fall into legal grey areas

- survivors are unsure whether the abuse is even illegal and whether perpetrators can be prosecuted

Enforcement is lagging because even when laws exist, investigators need digital forensics expertise, cross-border coordination and platform cooperation to build a case, while most justice systems don’t have adequate resources for any of these

- evidence disappears fast as content spreads and copies multiply, while perpetrators hide behind anonymity or operate across jurisdictions

- platforms are slow or unwilling to share data with law enforcement, especially in cross-border cases

- digital forensics backlogs mean cases stall before they even get started

Tech platforms are failing survivors as they have long hidden behind “intermediary” status to avoid responsibility for user-generated content.

What must happen now

While a number of countries and regions are taking action (see text box below), stopping deepfake abuse requires urgent, coordinated action from governments, institutions and tech platforms.

Here are five things that need to happen:

1. Laws that actually cover deepfake abuse

Governments must pass legislation with clear definitions of AI-generated abuse and a focus on consent, strict liability for perpetrators, fast-track removal obligations for platforms and cross-border enforcement protocols.

2. Justice systems that can investigate and prosecute

Law enforcement needs training, resources and dedicated capacity to collect and preserve digital evidence. Digital forensics backlogs must be addressed, and international cooperation frameworks must become fast, functional and fit for purpose.

3. Platforms held accountable

Tech companies must be legally required to proactively monitor for and remove abusive content within mandatory timelines, cooperate with law enforcement and face real financial penalties when they fail to act.

4. Real support for survivors

Trained, trauma-informed law enforcement and legal professionals, as well as free legal aid, must be accessible.

5. Education that prevents abuse

Digital literacy, including consent education, online safety, and what to do when experiencing abuse, needs to start young and reach everyone, as prevention is as important as prosecution.

UN Women warns this isn’t a niche internet problem: “It’s a global crisis.”

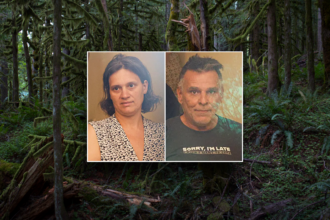

- in a recent high-profile case, UK journalist Daisy Dixon discovered AI-generated, sexualised images of herself on X in December 2025, created using the platform’s own Grok AI tool; it took days for the platform to geoblock the function, while the abuse kept spreading

- deepfake abuse can serve as an online catalyst for so-called “honour-based crimes” in certain cultural contexts, where a perceived breach of honour norms on digital platforms can result in extreme physical violence against women, and even death

- more than half of deepfake victims in the United States of America contemplated suicide, according to recent research

Meanwhile, a handful of jurisdictions are beginning to act:

- Brazil amended its criminal code in 2025, increasing the penalty for inflicting psychological violence against women using AI or other technology to alter their image or voice

- the European Union Artificial Intelligence (AI) Act imposes transparency obligations around deepfakes

- the United Kingdom’s Online Safety Act prohibits sharing digitally manipulated explicit images, but does not address the creation of deepfakes and may not apply where intent to cause distress cannot be proven

- the United States Take It Down Act explicitly covers AI-generated intimate imagery and requires platform removal within 48 hours