In 1988, a London preteen with a penchant for programming and gaming wrote a version of the classic board game Othello (also known as Reversi) for his Amiga 500 home computer. Teaching a piece of software to play the game was an ambitious coding project for someone so young.

And with that, Demis Hassabis notched his first achievement in the field of artificial intelligence.

The Othello-playing app “beat my kid brother, who was only five at the time,” Hassabis recalls. “It was an ‘aha’ moment for me, because I just thought, ‘Wow, it’s incredible that you can make a program that’s inanimate and it can go off and do something on your behalf.’”

That proved to be a fateful epiphany. More than 20 years later, it led to him cofounding DeepMind, the AI startup that did much to push the technology forward, both before and after its acquisition by Google in 2014. In 2023, Google merged DeepMind with Google Brain, its other highly productive AI arm, and named Hassabis CEO of the combined operation, Google DeepMind. The AI model he oversees, Gemini, is now at the heart of Google products used by billions of people.

Long before the fruits of DeepMind’s work were everywhere, the company was a research lab whose early focus was on training algorithms to play games. That focus didn’t just hark back to Hassabis’s childhood Othello app. From the very dawn of AI, researchers have used gaming as a canvas for discovery. For instance, back in 2019, I wrote about a 1960 TV special that documented IBM’s checkers-playing computer.

Games are so powerful as a research tool because they’re “a microcosm of something important in real life,” explains Hassabis. “And we get to practice it many times in an environment that’s serious, but not serious, in a sense.”

Last month marked the 10th anniversary of the capstone to that quest: a history-making moment not just for DeepMind, but for the entire AI field. The 2,500-year-old Chinese board game Go had been considered, in Hassabis’s words, “the Mount Everest of game AI.” Its mechanics were so deep and mystical that for years, computers struggled to play it even poorly, let alone well. But from March 9 to 15, 2016, in a match held in Seoul, DeepMind’s AlphaGo software beat Go world champion Lee Sedol four games to one.

The victory reverberated far beyond the community of obsessives who had wondered if it was even possible. “Maybe, looking back on it now, it was the beginning of what we would consider the modern AI era,” says Hassabis. It was certainly tangible evidence that the technology could amaze even the people responsible for its breakthroughs. It was soon joined by other signs, such as Google Brain’s June 2017 research paper on “transformers,” the fundamental ingredient that would give us generative AI.

AlphaGo also marked a transition for DeepMind. Once its AI had conquered Go, gaming was short on obvious Mount Everests left to climb, and more consequential challenges beckoned. In 2018, DeepMind unveiled the first version of AlphaFold, its algorithm for predicting protein structures. That breakthrough’s transformative implications in areas such as drug discovery and materials research inspired the creation of Isomorphic Labs, a new startup within Google’s parent company Alphabet. It also led to Hassabis and DeepMind distinguished scientist John Jumper sharing the 2024 Nobel Prize in Chemistry.

Today, Google DeepMind’s website reflects its wide-ranging research efforts, from predicting weather to error-correcting quantum computers to understanding how dolphins communicate. But Hassabis doesn’t talk about games as if they’re a musty part of his past. Indeed, he’s as engaged and proud talking about the long road that led to AlphaGo’s big win as he is discussing Google DeepMind’s current work. Games just happened to be the first AI challenge that captured his imagination, and what he learned along the way remains as relevant as ever.

“It was obvious to me from 16, 17 years old that AI was what I was going to do with my career,” he says. “And, if it could work, the biggest thing of all time.”

From chess to Pong to Go

By the time Hassabis tackled Othello on his Amiga, he was already an old hand at board-game wizardry. At four, he took up chess. At eight, he’d earned enough playing it competitively to buy his first computer. At 13, he became the world’s second-highest-rated player under the age of 14, after the legendary Judit Polgár.

Hassabis credits his time as a chess prodigy with sharpening his skills at problem-solving, visualization, and thinking clearly under pressure. It doesn’t seem a stretch to guess that it was a boon to his self-confidence as well. “There aren’t many things children can do where they can compete against adults at the highest level when they’re five or six years old,” he says. (He recommends chess as part of school curricula and still plays it online in the middle of the night as “a gym for the mind.”)

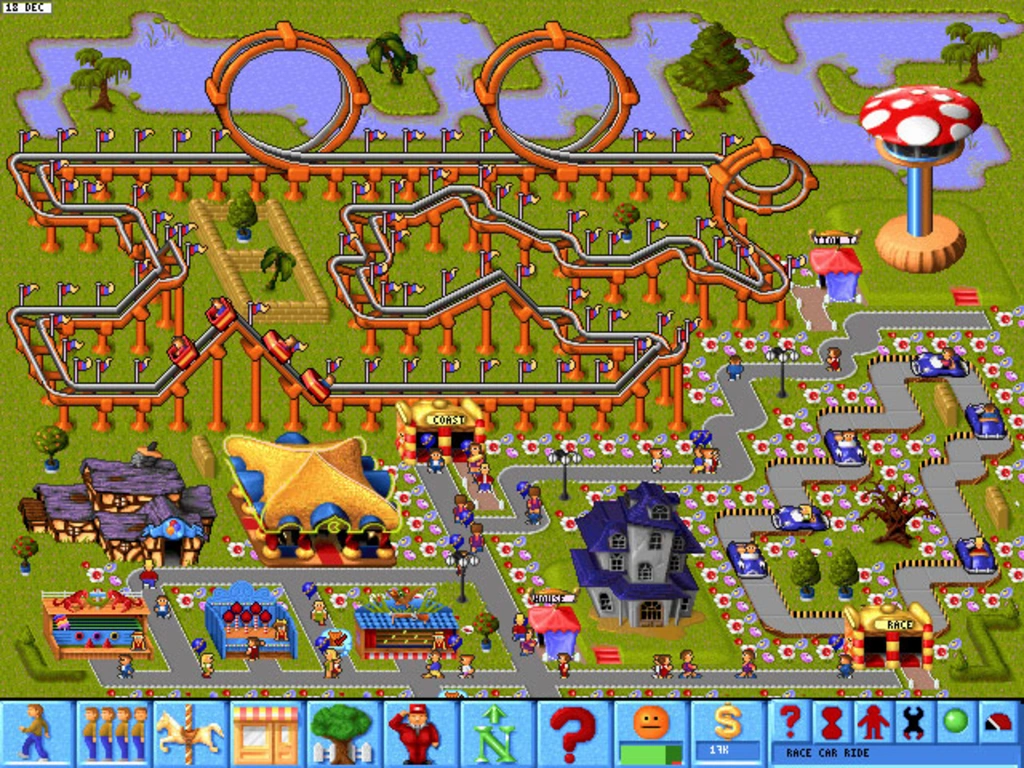

Still a wunderkind at age 17, Hassabis landed an internship at computer game studio Bullfrog after entering a contest in a magazine for Amiga users. Before long, he’d co-created Theme Park, an amusement-park simulator that sold millions of copies.

Theme Park didn’t just let players choose rides. They also set prices, hired staff, ran concessions, bought stock, and otherwise tuned the business to thrive. Unlike a board game or most computer games of the time, it offered completely open-ended play, driven by an AI that responded to the player rather than a fixed set of rules.

As Hassabis watched his creation behave in ways he hadn’t explicitly programmed into it, his mind reeled. “The key thing was that every time someone played the game, they had a unique experience, because the AI would react to how they were playing it,” he recalls. “We got letters from kids. They sent screenshots of these amazing end states they got their theme parks into. And we had no idea you could even do that, even though we’d made the game.”

Sixteen years elapsed between Theme Park’s release and DeepMind’s founding. In that time, Hassabis earned a BA in computer science and a PhD in cognitive neuroscience, with more time in the game business sandwiched in between.

When he and his friends Shane Legg and Mustafa Suleyman decided to start an AI company together, they did so with an aspiration, even loftier in 2010 than now, of creating algorithms that could at least match human cognitive ability at typical tasks. (Legg called that artificial general intelligence, or AGI, a term the entire field embraced.) But the cofounders began with a vastly more manageable project: training AI to excel at early Atari home video games such as Pong, Breakout, and Space Invaders.

Not that it was a sure thing at the time. “We might have been 20 years too early,” says Hassabis. “Nobody knew. And so we had to try it.”

The fact that the games in question were ultra-minimalist 1970s relics didn’t make for instant gratification. “It took months to win a single point at Pong, the simplest Atari game,” Hassabis recalls. Eventually, though, “We won the game 21-nil,” he says. “And then we could play all Atari games after another year or so.”

The technique DeepMind used to trounce Pong, deep reinforcement learning, had broad applicability in AI beyond gaming. Heartened by its progress, the company turned its attention to Go.

Leaping directly from some of the world’s most basic games to one of unequaled complexity might sound jarring, but it may have been inevitable. Teaching AI to play Go at the highest possible level had been an irresistibly audacious goal for computer scientists since the 1970s. It had also been on Hassabis’s own mind for 20 years, though he was only an amateur at the game himself.

As a Cambridge undergrad, he’d discussed AI and Go with a classmate, David Silver. In 2008, MoGo, a program Silver had co-created, became the first software to beat a professional Go player, albeit with the advantage of a handicap. Hassabis was reunited with his old friend when Silver joined DeepMind, where he worked on the Atari project and went on to lead AlphaGo’s development.

Decades of thought had also gone into chess-playing AI before IBM’s Deep Blue beat reigning world champion Garry Kasparov in 1997. But compared to Go, chess seemed like Candy Land. “In Go, there are 10 to the power of 170 possible board positions, far more than there are atoms in the universe,” says Hassabis. That ruled out the brute-force approach IBM had taken with Deep Blue, which relied on searching through an enormous number of possible move sequences.

DeepMind ended up training deep neural networks, in part through reinforcement learning, to steer AlphaGo toward only the most meaningful moves for any given arrangement of stones on the Go board. Hassabis compares the approach to infusing the algorithm with human intuition. But AlphaGo could take more data into account than even the most gifted and disciplined human player, giving it the chance to make decisions that felt not just intuitive, but magical.

That point was proven early in game two of AlphaGo’s match with Lee Sedol, in a way that left jaws agape when it happened and still resonates today. For the game’s 37th move, forever after known as “Move 37,” the AI chose a play so unexpected that eyewitnesses wondered whether Aja Huang, the DeepMind scientist responsible for placing AlphaGo’s stones on the board, had made it in error.

“Lee Sedol chose that moment to go and have a smoke on the balcony,” recounts Hassabis. “He comes back in, and he sees Move 37. You see his facial expression change, and he’s sort of amazed by it. And bemused, perhaps.”

Everyone involved knew that no human Go master would have made Move 37. But it wasn’t clear until much later in the game whether it had been remarkably brilliant or remarkably dumb. Ultimately, it turned out to be essential to beating Lee Sedol, “almost as if AlphaGo put the piece there for 100 moves later,” says Hassabis. “Not only was it unusual, it was the pivotal move to win the game. That’s what makes it one of the greatest Go moves of all time.”

Maybe you’d have to be a serious Go aficionado (which I’m not) to truly appreciate what made Move 37 special. But it’s easy to get swept up in its drama when watching AlphaGo, the 2017 documentary about the match. The move continues to be fodder for classes, presentations, blog posts, and podcasts, making it a strong candidate for the most-analyzed single decision made by AI to date.

Of course, if Move 37 were merely a startling bit of board-game play, it wouldn’t be so endlessly compelling. By making it, AlphaGo showed that AI is capable of not just simulating human thought, but going beyond it. Achieving that higher state of reasoning was why DeepMind took on Go in the first place.

Subsequent research efforts such as AlphaFold have aimed to catalyze a similar effect. “The real world’s a lot harder than a game,” says Hassabis, but “you need that element of finding a new insight or new structure in the data. That’s what you’re looking for in science.” He adds that Move 37-like thinking is also apparent in current Google products such as the Deep Think version of Gemini, which is tuned for applications in science, math, and engineering.

At its best, human game play, whether on a computer, a board, or an athletic field, is an act of creativity. Hassabis doesn’t hesitate to call Move 37 creative. But mind-blowing though it was, he doesn’t consider it equivalent to human creativity at its most inspired.

“It’s not true out-of-the-box creativity,” he stresses. “Because that would be something like [telling] the AI system, ‘Come up with an elegant game that only takes a few hours to play. It takes five minutes to learn the rules, but many lifetimes to master. And it’s aesthetically beautiful as well.’”

In other words, he says, AI must do more than conjure up additional moments like Move 37 to prove its creative bona fides: “It needs to invent a game as deep and as beautiful as Go, and obviously, with today’s systems, we’re nowhere near that.” That gives AI researchers at Google DeepMind and elsewhere another gaming Everest to scale, and gives us humans comforting evidence that we remain unbeatable, for now, on at least one meaningful front.